Faster data transport.

It’s not news to anyone that our most valuable resource is our time. As a developer, if you can do more in less time and deliver a more performant result, then you’ve stumbled across something worth exploring.

In this article and the next, we’ll be exploring protocol buffers and gRPC.

What is a protocol buffer?

Protocol buffers are a binary serialization format. You describe your data in a schema, in the form of a .proto file, that defines how data structures should be serialized and deserialized.

Why do I care?

- The binary encoding is compact, so payloads are typically smaller and faster to parse than JSON or XML, especially when transported using gRPC.

- Can achieve full compatibility with legacy code.

- Can be read across all the main programming languages.

- Can be used to generate tons of boiler-plate code for you.

- Created and maintained by Google.

What does a .proto file look like?

//Syntax statement specifies version.

syntax="proto3";

/* You can include an import from another proto-file here

* but, you have to use the fully-qualified path starting from

* project root. */

import "projectRoot/somePkg/myproto.proto";

//you can also organize proto files as packages

package mypkg;

//representation of a data-structure called MyMessage

message MyMessage {

/* Fields include a data-type, field-name and are assigned a tag number.

* The tag number identifies the field in the serialized

* message, so it must be unique within the message. */

string someFieldName = 1;

int32 someOtherName = 2;

// You can use lists/slices/arrays using the repeated keyword (an element type is required)

repeated string my_list = 3;

// you can use enums too. Always specify a default for invalid/unknowns.

enum colors {

UNKNOWN_COLOR = 0;

red = 1;

blue = 2;

green = 3;

}

colors color_list = 4;

}

For more information on which data-types are available, check out the language guide.

How is it able to work with any programming language?

After writing your schema, i.e. your .proto file, you compile it to generate a bunch of boiler-plate code. You can do this for just about any programming language you want with the following command format:

protoc --<language>_out=<outputPath> <pathToProtoFile>

Example:

protoc --go_out=. /path/to/myproto.proto

Since you can generate code in multiple languages from the same .proto file, every language follows a uniform method of serializing/deserializing the data structures you defined.

What do you mean full compatibility with legacy code?

Backward compatibility means an application using a newer version of a message schema can read data it receives from an application using an older version of the same message schema.

Forward compatibility means an application using an older version of a message schema can read data it receives from an application using a newer version of the same schema.

Full compatibility means you get both.

How? What if I need to update the fields in one or multiple messages in my .proto file? Won’t that break compatibility?

Good question. There are definitely things to be aware of in terms of how this compatibility is maintained, especially when making changes to the .proto file.

Let’s take a look at some ways you would typically update a message in a .proto file.

Adding new fields to a message

Consider the change in the below message schema.

version 1 message schema

message MyMessage {

int32 id = 1;

}

version 2 message schema

message MyMessage {

int32 id = 1;

string name = 2;

}

How does this affect a newer application reading data from an older one?

Application 1 is using the v1 schema and application 2 is using the v2 schema.

Application 1 sends a message to application 2.

Application 2 doesn’t receive an entry for name from application 1.

Therefore, application 2 sets name to the zero value for its type.

In this case name is a string, so its field value is set to "".

Wait a second - How do I differentiate a missing field from a set default value?

With a plain proto3 field, you can’t. The two cases look identical to the receiver, so they can be dangerously misinterpreted.
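To make this concrete, here is a minimal Python sketch of the binary encoding, written by hand rather than with the real protobuf library. The helper names (encode_varint, serialize_v2, parse_v2) are made up for illustration, and the sketch only handles non-negative integers and strings. It assumes the usual proto3 behavior that a field holding its zero value is not written at all.

```python
def encode_varint(n):
    """Encode a non-negative integer as a base-128 varint."""
    out = bytearray()
    while True:
        byte = n & 0x7F
        n >>= 7
        if n:
            out.append(byte | 0x80)  # more bytes follow
        else:
            out.append(byte)
            return bytes(out)


def decode_varint(buf, pos):
    """Decode a varint starting at pos; return (value, next_pos)."""
    result, shift = 0, 0
    while True:
        byte = buf[pos]
        pos += 1
        result |= (byte & 0x7F) << shift
        if not byte & 0x80:
            return result, pos
        shift += 7


def serialize_v2(id_, name):
    """Hypothetical v2 writer (id = 1, name = 2) that skips zero values."""
    out = b""
    if id_ != 0:
        out += encode_varint((1 << 3) | 0) + encode_varint(id_)
    if name != "":
        data = name.encode("utf-8")
        out += encode_varint((2 << 3) | 2) + encode_varint(len(data)) + data
    return out


def parse_v2(buf):
    """Hypothetical v2 reader: start from zero values, overwrite what arrives."""
    msg, pos = {"id": 0, "name": ""}, 0
    while pos < len(buf):
        key, pos = decode_varint(buf, pos)
        number, wire_type = key >> 3, key & 0x07
        if wire_type == 0:  # varint
            value, pos = decode_varint(buf, pos)
            if number == 1:
                msg["id"] = value
        elif wire_type == 2:  # length-delimited
            length, pos = decode_varint(buf, pos)
            if number == 2:
                msg["name"] = buf[pos:pos + length].decode("utf-8")
            pos += length
        else:
            raise ValueError("wire type not handled in this sketch")
    return msg


# A v1 sender only knows about id, so it writes a single field.
from_v1_sender = encode_varint((1 << 3) | 0) + encode_varint(7)
# A v2 sender that explicitly sets name to "" writes the same bytes.
from_v2_sender = serialize_v2(7, "")

print(from_v1_sender == from_v2_sender)
print(parse_v2(from_v1_sender))
```

Both payloads come out byte-for-byte identical, which is why the receiver has nothing to tell the two cases apart with.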

How so?

Let’s say you add a new field called account_balance to a message.

message schema v1:

message Account {

string name = 1;

}

message schema v2:

message Account {

string name = 1;

float account_balance = 2;

}

Application 1 uses message schema v1 and application 2 uses message schema v2.

When application 1 sends data to application 2, account_balance gets set to 0 because the message won’t contain a field for account_balance.

As a user of application 2, it would be a less than pleasant surprise to get an insufficient funds notification based on this value.

With that said, it may be a good idea to implement some additional server-side logic to double-check that account_balance against a persisted source before jumping the gun and causing unnecessary alarm.

How does this affect an older application reading data from a newer one?

Application 2 is using the v2 schema and sends application 1 a message containing the account_balance field.

Application 1 will completely ignore tag 2, i.e. the account_balance field, because it has no idea what tag 2 corresponds to, which is the expected behavior and is totally fine.
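Here is a rough Python sketch of how an older reader can step over a tag it doesn’t recognize. It is hand-rolled for illustration and is not the real protobuf library; parse_account_v1 and skip_field are hypothetical names. The key idea is that every field on the wire starts with a key holding the tag number and a wire type, and the wire type alone tells the reader how many bytes to skip.

```python
import struct


def encode_varint(n):
    """Encode a non-negative integer as a base-128 varint."""
    out = bytearray()
    while True:
        byte = n & 0x7F
        n >>= 7
        if n:
            out.append(byte | 0x80)
        else:
            out.append(byte)
            return bytes(out)


def decode_varint(buf, pos):
    """Decode a varint starting at pos; return (value, next_pos)."""
    result, shift = 0, 0
    while True:
        byte = buf[pos]
        pos += 1
        result |= (byte & 0x7F) << shift
        if not byte & 0x80:
            return result, pos
        shift += 7


def skip_field(buf, pos, wire_type):
    """Return the position just past a field the reader doesn't know."""
    if wire_type == 0:  # varint
        _, pos = decode_varint(buf, pos)
        return pos
    if wire_type == 1:  # 64-bit fixed
        return pos + 8
    if wire_type == 2:  # length-delimited
        length, pos = decode_varint(buf, pos)
        return pos + length
    if wire_type == 5:  # 32-bit fixed (float, fixed32)
        return pos + 4
    raise ValueError("unsupported wire type")


def parse_account_v1(buf):
    """Hypothetical v1 reader: it only knows name = 1."""
    name, pos = "", 0
    while pos < len(buf):
        key, pos = decode_varint(buf, pos)
        number, wire_type = key >> 3, key & 0x07
        if number == 1 and wire_type == 2:
            length, pos = decode_varint(buf, pos)
            name = buf[pos:pos + length].decode("utf-8")
            pos += length
        else:
            pos = skip_field(buf, pos, wire_type)
    return {"name": name}


# What a v2 sender would write: name = 1 plus the float account_balance = 2.
raw_name = "faris".encode("utf-8")
v2_payload = (
    encode_varint((1 << 3) | 2) + encode_varint(len(raw_name)) + raw_name
    + encode_varint((2 << 3) | 5) + struct.pack("<f", 12.5)
)

print(parse_account_v1(v2_payload))  # {'name': 'faris'}
```

This sketch simply discards the unknown field. Real implementations may instead keep the unknown bytes around so they survive a re-serialize, but either way the older application keeps working.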

Updating existing fields in a message

In terms of field names, it doesn’t matter at all.

Go ahead and change the field name as many times as you want.

How so?

In the binary format, the field name is never written to the wire. Each value is serialized together with its tag number, and the tag number is what the receiver uses to match the value to a field.

Note: Changing the data-type or tag number of a field is a totally different ball-game than changing the field name. If applications are running different schema versions, changing data-types and/or tag numbers will almost always be a breaking change.
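A small Python sketch shows why a rename is harmless. It encodes the same values under two hypothetical schemas that differ only in the name given to tag 2. The encoder is written by hand for illustration (it is not the real protobuf library) and only supports non-negative integers and strings.

```python
VARINT, LEN = 0, 2  # the two wire types this sketch supports


def encode_varint(n):
    """Encode a non-negative integer as a base-128 varint."""
    out = bytearray()
    while True:
        byte = n & 0x7F
        n >>= 7
        if n:
            out.append(byte | 0x80)
        else:
            out.append(byte)
            return bytes(out)


def encode_field(tag, value):
    """Encode one field as key + payload, where key = (tag << 3) | wire type."""
    if isinstance(value, int):
        return encode_varint((tag << 3) | VARINT) + encode_varint(value)
    data = value.encode("utf-8")
    return encode_varint((tag << 3) | LEN) + encode_varint(len(data)) + data


def serialize(schema, values):
    """Look up each field's tag by name, then write only tags and values."""
    return b"".join(encode_field(schema[name], v) for name, v in values.items())


# Hypothetical schemas: same tags, but tag 2 has been renamed.
schema_a = {"id": 1, "name": 2}
schema_b = {"id": 1, "display_name": 2}

bytes_a = serialize(schema_a, {"id": 150, "name": "faris"})
bytes_b = serialize(schema_b, {"id": 150, "display_name": "faris"})

print(bytes_a.hex())       # 08960112056661726973
print(bytes_a == bytes_b)  # True
```

The name is only used to look up the tag. Nothing derived from the name ends up in the output, so both schemas produce the same bytes.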

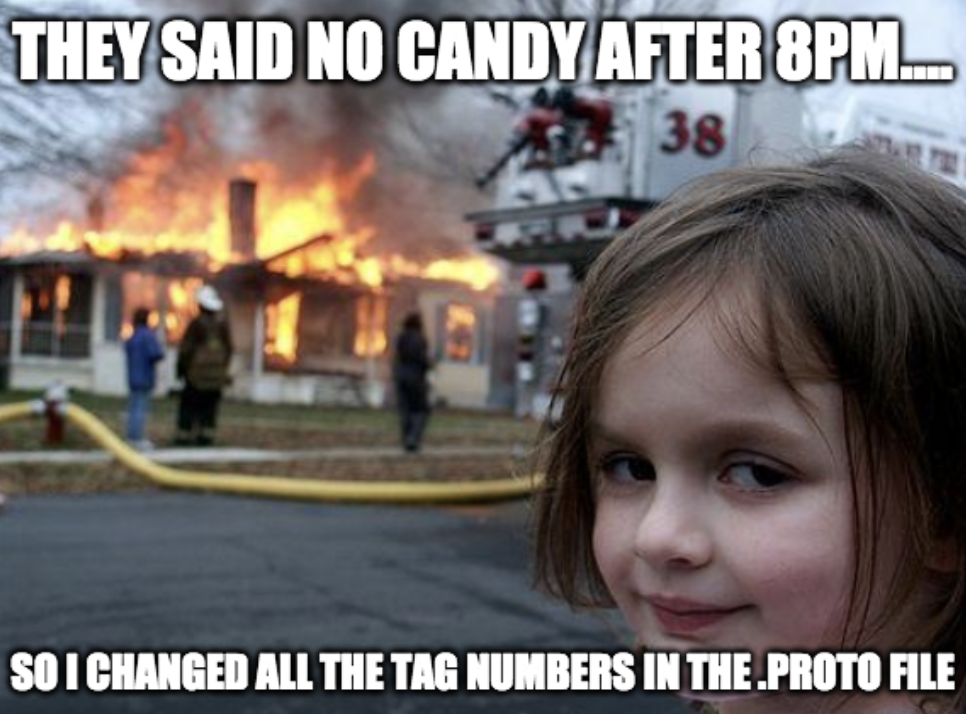

I don’t want to blow up the code-base disaster girl style

No? Then avoid changing tag numbers in the .proto file. Changing data-types is complicated and I will not go into it as it’s easily avoidable by just adding new fields.

This is by no means meant to scare you away from the tech. There are tremendous upsides to using protocol-buffers. However, there are things to be cautious of and I am simply employing disaster girl to better illustrate my point. Perhaps to an exaggerated extent.

Removing fields from a message

Adding and renaming fields is pretty trivial. Of the three, removing fields deserves the most attention.

Really? Why can’t I just delete unused fields?

Let’s consider the changes:

message schema v1:

message MyMessage {

int32 id = 1;

string username = 2;

}

message schema v2:

message MyMessage {

int32 id = 1;

}

Now let’s assume another team member comes in and needs to add a new field. Logically, based on what they see, they will assign the new field a tag number of 2.

message schema v3:

message MyMessage {

int32 id = 1;

int64 unix_time = 2;

}

This is a problem. Every application out there that is still using the v1 schema will now receive a completely different data-type for that field. Depending on the implementation, this can throw errors when unmarshalling or cause the value to be silently dropped.

Let’s assume we added a new field of the same type as the field we removed.

message schema v3:

message MyMessage {

int32 id = 1;

string email = 2;

}

This will unmarshal. However, an application using the v1 message schema will now be receiving email values where it expects usernames.

Let’s assume application 1 is using this value to set account names on a social-media-like web app. Now the users’ emails display as their usernames, exposing information that they may not have wanted to publicize. Not cool.
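The mix-up can be reproduced with a short Python sketch. As before, this is a hand-written approximation of the binary format and not the real protobuf library, and serialize_v3 and parse_v1 are hypothetical helpers. The writer follows schema v3 (email = 2) and the reader still follows schema v1 (username = 2).

```python
def encode_varint(n):
    """Encode a non-negative integer as a base-128 varint."""
    out = bytearray()
    while True:
        byte = n & 0x7F
        n >>= 7
        if n:
            out.append(byte | 0x80)
        else:
            out.append(byte)
            return bytes(out)


def decode_varint(buf, pos):
    """Decode a varint starting at pos; return (value, next_pos)."""
    result, shift = 0, 0
    while True:
        byte = buf[pos]
        pos += 1
        result |= (byte & 0x7F) << shift
        if not byte & 0x80:
            return result, pos
        shift += 7


def serialize_v3(id_, email):
    """Schema v3 writer: id = 1 (varint), email = 2 (length-delimited)."""
    data = email.encode("utf-8")
    return (
        encode_varint((1 << 3) | 0) + encode_varint(id_)
        + encode_varint((2 << 3) | 2) + encode_varint(len(data)) + data
    )


def parse_v1(buf):
    """Schema v1 reader: id = 1, username = 2."""
    msg, pos = {"id": 0, "username": ""}, 0
    while pos < len(buf):
        key, pos = decode_varint(buf, pos)
        number, wire_type = key >> 3, key & 0x07
        if wire_type == 0:  # varint
            value, pos = decode_varint(buf, pos)
            if number == 1:
                msg["id"] = value
        elif wire_type == 2:  # length-delimited
            length, pos = decode_varint(buf, pos)
            if number == 2:
                # The reader has no way to know this is really an email.
                msg["username"] = buf[pos:pos + length].decode("utf-8")
            pos += length
        else:
            raise ValueError("wire type not handled in this sketch")
    return msg


v3_payload = serialize_v3(42, "faris@example.com")
print(parse_v1(v3_payload))  # {'id': 42, 'username': 'faris@example.com'}
```

Nothing fails here, which is exactly the danger: the payload is perfectly valid for the v1 reader, it just means something else.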

Ok so definitely don’t delete fields. How do I properly remove unused fields then?

When removing fields you should always reserve both the tag number and the field name like so:

message MyMessage {

reserved 2;

reserved "username";

int32 id = 1;

}

Reserved is a keyword and it can be used on both tag numbers and field names as seen above. You can also reserve ranges of tag numbers like so:

reserved 4 to 6;

or multiple field names in the same line like:

reserved "name","email";

A single reserved statement can’t mix field names and tag numbers, so they have to go in separate statements.

Note: Never remove reserved tags. It should be totally clear to everyone on your team which tags are reserved to prevent confusion, bugs, disaster girl, etc.
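The compiler rejects any new field that reuses a reserved tag number or name. The Python sketch below only imitates that check to show what reserving buys you. It is not how protoc is implemented, and RESERVED_NUMBERS, RESERVED_NAMES and check_field are hypothetical names. The reserved values are taken from the examples above.

```python
# Inclusive ranges: "reserved 2;" and "reserved 4 to 6;"
RESERVED_NUMBERS = [(2, 2), (4, 6)]
# reserved "name", "email";
RESERVED_NAMES = {"name", "email"}


def check_field(name, number):
    """Return a list of problems with a proposed new field (empty if fine)."""
    problems = []
    if any(low <= number <= high for low, high in RESERVED_NUMBERS):
        problems.append(f"tag number {number} is reserved")
    if name in RESERVED_NAMES:
        problems.append(f'field name "{name}" is reserved')
    return problems


print(check_field("unix_time", 2))   # ['tag number 2 is reserved']
print(check_field("created_at", 7))  # []
```

With the reservation in place, the teammate who reaches for tag 2 gets stopped at compile time instead of shipping a silent data mix-up.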

I’m sold and ready to start reaping the many benefits of protocol-buffers

Sweet! You’ll want to download the compiler.

Is that it?

Not quite. To fully reap the benefits of protocol buffers and enable the “application 1 sends data to application 2” portion of the examples we’ve discussed, we’ll need to incorporate gRPC into the mix.

Think of protocol buffers as the interface for the data, defining how it gets serialized and deserialized.

gRPC on the other hand, also developed by Google, is the technology that is responsible for actually transporting that serialized data.

I’ll cover the different types of gRPC APIs in my next few posts.

Much love,

-Faris